SD-WAN has exploded in the market. Everywhere I turn, I see companies touting their new strategy for reducing WAN complexity, encrypting data in flight, and even doing analytics on traffic to help build QoS policies and traffic shaping for critical links. The first demo I ever watched for SDN was a WAN routing demo that chose best paths based on cost and time-of-day. It was simple then, but that kind of thinking has exploded in the last 5 years. And it’s all thanks to our lovable old friend, Ethernet.

Those Old Serials

When I started in networking, my knowledge was pretty limited to switches and other layer 2 devices. I plugged in the cables, and the things all worked. As I expanded up the OSI model, I started understanding how routers worked. I knew about moving packets between different layer 3 areas and how they controlled broadcast storms. This was also around the time when layer 3 switching was becoming a big thing in the campus. How was I supposed to figure out the difference between when I should be using a big router with 2-3 interfaces versus a switch that had lots of interfaces and could route just as well?

The key for me was media types. Layer 3 switching worked very well as long as you were only connecting Ethernet cables to the device. Switches were purpose built for UTP cable connectivity. That works really well for campus networks with Cat 5/5e/6 cabling. Switched Virtual Interfaces (SVIs) can handle a large amount of the routing traffic.

For WAN connectivity, routers were a must. Because only routers were modular in a way that accepted cards for different media types. When I started my journey on WAN connectivity, I was setting up T1 lines. Sometimes they had an old-fashioned serial connector like this:

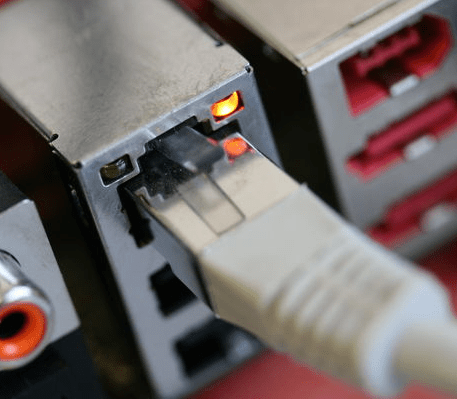

Those connected to external CSU/DSU modules. Those were a pain to configure and had multiple points of failure. Eventually, we moved up in the world to integrated CSU/DSU modules that looked like this:

Those are really awesome because all the configuration is done on the interface. They also take regular UTP cables instead of those crazy V.35 monsters.

But those UTP cables weren’t Ethernet. Those were still designed to be used as serial connections.

It wasn’t until the rise of MPLS circuits and Transparent LAN services that Ethernet became the dominant force in WAN connectivity. I can still remember turning up my first managed circuit and thinking, “You mean I can use both FastEthernet interfaces? No cards? Wow!”.

Today, Ethernet dominates the landscape of connectivity. Serial WAN interfaces are relegated to backwater areas where you can’t get “real WAN connectivity”. And in most of those cases, the desire to use an old, slow serial circuit can be superseded by a 4G/LTE USB modem that can be purchased from almost any carrier. It would appear that serial has joined the same Heap of History as token ring, ARCnet, and other venerable connectivity options.

Rise, Ethernet

The ubiquity of Ethernet is a huge boon to SD-WAN vendors. They no longer have to create custom connectivity options for their appliances. They can provide 3-4 Ethernet interfaces and 2-3 USB slots and cover a wide range of options. This also allows them to simplify their board designs. No more modular chassis. No crazy requirements for WIC slots, NM slots, or any other crazy terminology that Cisco WAN engineers are all too familiar with.

Ethernet makes sense for SD-WAN vendors because they aren’t concerned with media types. All their intelligence resides in the software running on the box. They’d rather focus on creating automatic certificate-based IPsec VPNs than figuring out the clock rate on a T1 line. Hardware is not their end goal. It is much easier to order a reference board from Intel and plug it into a box than trying to configure a serial connector and make a custom integration.

Even SD-WAN vendors that are chasing after the service provider market are benefitting from Ethernet ubiquity. Service providers may still run serial connections in their networks, but management of those interfaces at the customer side is a huge pain. They require specialized technical abilities. It’s expensive to manage and difficult to troubleshoot remotely. Putting Ethernet handoffs at the CPE side makes life much easier. In addition, making those handoffs Ethernet makes it much easier to offer in-line service appliances, like those of SD-WAN vendors. It’s a good choice all around.

Serial connectivity isn’t going away any time soon. It fills an important purpose for high-speed connectivity where fiber isn’t an option. It’s also still a huge part of the install base for circuits, especially in rural areas or places where new WAN circuits aren’t easily run. Traditional routers with modular interfaces are still going to service a large number of customers. But Ethernet connectivity is quickly growing to levels where it will eclipse these legacy serial circuits soon. And the advantage for SD-WAN vendors can only grow with it.

Tom’s Take

Ethernet isn’t the only reason SD-WAN has succeeded. Ease of use, huge feature set, and flexibility are the real reasons when SD-WAN has moved past the concept stage and into deployment. WAN optimization now has SD-WAN components. Service providers are looking to offer it as a value added service. SD-WAN has won out on the merits of the technology. But the underlying hardware and connectivity was radically simplified in the last 5-7 years to allow SD-WAN architects and designers to focus on the software side of things instead of the difficulties of building complicated serial interfaces. SD-WAN may not owe it’s entire existence to Ethernet, but it got a huge push in the right direction for sure.