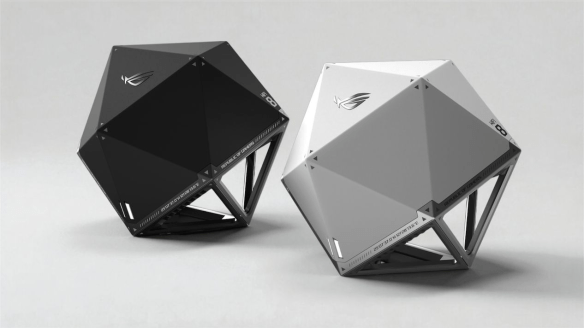

The ASUS ROG Wi-Fi 8 AP

Did you see the big news from CES? Wi-Fi 8 is here! Broadcom was talking about it. ASUS rolled out a d20 Wi-Fi 8 AP. MediaTek made sure they had one too. I guess I should probably take down all these Wi-Fi 7 APs and just install the new stuff, right?

Nuts and Bolts

Before my favorite people start jumping out there to talk about how the newest iPhone and Samsung Galaxy phones are trash because they don’t support Wi-Fi 8, we need to make sure we’re all on the same page here. The standard behind the Wi-Fi 8 marketing term, 802.11bn, is a proposed working standard. It’s not finalized yet. It’s still in the draft phases, with final standards approval not expected until September 2028.

The focus of 802.11bn is not speed. It’s reliability. When you look at the way that vendors have been pushing more and more throughput for the past several revisions, you might ask yourself how much faster things could get. For the eighth release of the Wi-Fi standard the answer is “not any faster.” Wi-Fi 8 is keeping the same speed numbers that Wi-Fi 7 has right now. Instead, the developers are looking to add features like reduced power consumption, extended range, and quality of service (QoS) enhancements.

These goals are important because speed isn’t going to solve all your problems. Faster doesn’t mean better when you’re dropping too many packets or your streaming video gets caught behind file transfers like patches to your PlayStation games. Likewise, the extended range capabilities are designed to keep asymmetric connections from causing issues when your upload is paltry compared to the downlink. Despite the marketing behind “bigger number means better”, having a new protocol focused on consistency over expansion is important. Look no further than OS X Snow Leopard for the value of a maintenance release focused on fixing problems over creating new ones.
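If you want to see why a faster link doesn't fix the "video stuck behind a game patch" problem, here's a toy sketch. To be clear, this is not how 802.11bn does QoS. The packet sizes, link rate, and priority values are all made up for illustration, and every packet is assumed to be queued at time zero. It just compares first-come-first-served against a simple priority queue on the same link.

```python
import heapq

# Hypothetical traffic, in arrival order: (flow name, size in KB).
PACKETS = [("patch", 1500), ("patch", 1500), ("video", 100),
           ("patch", 1500), ("video", 100)]
LINK_KB_PER_MS = 100  # assumed link rate, identical for both schedulers


def fifo_finish_times(packets, rate):
    """Send packets in arrival order; return (name, finish time in ms)."""
    clock = 0.0
    finished = []
    for name, size in packets:
        clock += size / rate
        finished.append((name, clock))
    return finished


def priority_finish_times(packets, rate, priority):
    """Send the lowest priority number first; ties keep arrival order."""
    heap = [(priority[name], idx, name, size)
            for idx, (name, size) in enumerate(packets)]
    heapq.heapify(heap)
    clock = 0.0
    finished = []
    while heap:
        _, _, name, size = heapq.heappop(heap)
        clock += size / rate
        finished.append((name, clock))
    return finished


def worst_delay(finished, name):
    """Latest finish time (ms) seen by any packet of the named flow."""
    return max(t for n, t in finished if n == name)


fifo = fifo_finish_times(PACKETS, LINK_KB_PER_MS)
prio = priority_finish_times(PACKETS, LINK_KB_PER_MS, {"video": 0, "patch": 1})

print(worst_delay(fifo, "video"))  # 47.0
print(worst_delay(prio, "video"))  # 2.0
```

Same link, same total time to drain the queue. The only thing that changed is the order, and the video went from waiting 47 ms to waiting 2 ms. That's the kind of win a reliability-focused release is chasing.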

Carts and Horses

So, why are we talking about Wi-Fi 8 right now? What is it about the industry that gets people so excited about tech that is barely baked at this point? If you’re already feeling like you are falling behind on the treadmill of upgrades you’re not alone. I would gather that my readers here aren’t the ones that are the target market for these announcements.

Where did these announcements happen? CES isn’t known as a hotbed for enterprise technology. Aside from the crazy focus on AI this year you’d be hard pressed to find firewalls and SANs and cloud computing advancements at CES. However, wireless technology crosses both markets. The same Wi-Fi you use in your enterprise works at your house too. And the real target market for these announcements is the gamer market.

How often do you upgrade your home wireless? I’d venture it’s not on the same 3-5 year cycle that your enterprise APs are on. I’d wager your upgrade cycle is more replacement of broken gear over exciting new features. That’s hard for manufacturers to predict. They like consistent cycles because their investors like consistent payouts. That means they need to get you to upgrade more often. If your devices are also on a similar upgrade trajectory you’re likely not going to be upgrading soon. Why upgrade to a Wi-Fi 7 AP when my laptop only supports Wi-Fi 6?

ASUS and MediaTek really want you to jump now. Sure, the gear is pre-pre-standard. Yeah, the advances aren’t totally baked right now and all that fancy QoS to prioritize streaming video is going to get marked down at the provider edge. What’s really important is that you buy it now because they need your cash to keep the product lines moving. Research and Development isn’t easy or cheap. And buying a new polyhedral AP means they can keep developing it when the standard inevitably changes later this year. More importantly, if you buy a pre-standard product in the consumer space now you can guarantee you’re not going to get an upgrade path to make it standard in two years. What you get instead is a device that’s cheap enough to replace in 2029 when the standard is finalized and devices are actually supporting those fancy new features in the protocol. The vendors get to double dip into your wallet.

Tom’s Take

Unless you’re buying one of these things for a review unit, don’t buy Wi-Fi 8. You don’t need it. It’s not ready. More importantly, not buying these units now when they are barely out of alpha means that companies, especially MediaTek, need to learn to put some more effort into the product instead of just trying to get you to jump at the newest biggest number. That’s just for the consumer side of the house. Please do not buy this to put into an enterprise, no matter how much your CEO begs you to do it for their office. You’re rewarding bad behavior and bad marketing. Take a little joy in making your executives wait for a technology to be fully baked before you implement it.