Innovation is key in our lives. It allows us to go from adding on our fingers to having a calculator capable of solving quadratic equations. It shows that we can graduate from playing checkers all the way up to tossing disaffecting fowl at inferior porcine construction workers. Innovation, however, is hard work. It requires significant time and investment to realize anything good. Helen Hanson said, “Inspiration is the windfall from hard work and focus.” However, in the tech arena, innovation doesn’t always seem to come from hard work. I always like to think of the source of tech innovation as coming from three different options: building it, buying it, or b*tching about it.

Building It – This one is the classical source of inspiration. Look at companies like Selsius developing CallManager, or Cognio’s spectrum analyzer chips. More often than not, these companies are led by brilliant individuals with a kind of hyperfocus that allows them to shut out all distractions and build the next better widget. Ryan Woodings of MetaGeek is a great example. He spent lots of time and hard work analyzing a need and creating a brilliant product to address it. Companies that go out on a limb to build better widgets help make the world better with new ideas to address our needs and desires. The only problem with this kind of development is the amount of time it takes to come up with these ideas. You must be willing to invest a large amount of resources to achieve your goals. How many inventors and innovators have gone broke trying to realize their dreams? Even Ryan had to struggle with keeping his day job until MetaGeek took off and became lucrative enough to be his new day job. Large companies like Cisco and Juniper suffer from the same problem on a different scale. They must sink huge budgets into research and development in order to create new products. Sometimes, the people in charge don’t take well to R&D budgets spiraling out of control, so they cut back and risk stifling innovation. You also run into issues with your company being brilliant, but unknown. How many times have you heard the phrase, “The best company no one’s ever heard of”? Chances are the kind of company that has brilliant people with an acute ability to focus on product innovation may not have the vision to tell people about their wonderful new widget. If that happens, they may wither and die before they get famous because no one knew who they were and how great their product was. In many cases, this leads to…

Buying It – If you don’t have a particularly inspirational idea or an innovative team to design something new, but you happen to be sitting on a pile of cash the size of a dump truck, you always have the option of buying innovation. This isn’t always a bad thing. If you have a company that has a great product and no exposure, you can take your money and invest it in the technology and people and market it yourself. It’s also faster in a lot of cases to purchase the time and effort that someone else has put in rather than reinventing the wheel. John Chambers at Cisco has a philosophy that if Cisco can’t be the best in a market, they’ll acquire the first or second place company and make them the best. Cisco bought Selsius and Cognio and Profigo and Protego and a whole host of other companies. HP has purchased Colubris and 3Com and 3PAR. Microsoft recently purchased Skype. Even Juniper has purchased Trapeze. Acquisitions happen all the time. At one point, Cisco was even funding engineers to branch out and start their own companies to perform research and development into new product lines. If they made good, Cisco would come back and buy the company and rehire the old engineer as the new director of that particular product’s development. That’s not to say that buying your R&D is always the best method. Eventually, you will run out of cash to buy companies, and then your research and innovation go kaput. Many of the best companies are just too big to swallow, no matter how much money you have to buy them. It’s also very tough to integrate the development teams from purchased assets into your fold. How many times do you see a company be purchased, then have the lead team leave six to eight months later? People who are used to having total control over the entire creative process sometimes don’t take kindly to corporate oversight. They have a difference of opinion and out the door they go, ostensibly to form a new company around the same idea.

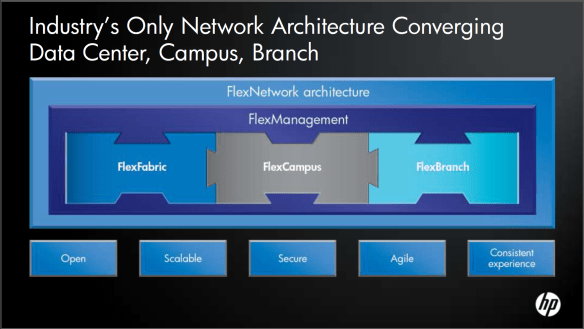

B*tch About It – I’m seeing this becoming a bigger and bigger method of innovation recently. It seems that when a company comes up with an idea, a competitor does their best to knock it down several pegs. Whether it be to buy time to bring their own strategy to market or to pitch an idea with a totally different product, the idea is that by complaining (often loudly), you can force your people to innovate while making sure the other guys can’t sell their widgets. For a particular example, I’m going to pick on TRILL. Right now, TRILL isn’t a standard. Some companies, like Cisco and Brocade, have come out with proprietary features that function much like TRILL eventually will, but don’t totally interoperate. Others, like Juniper and HP, have decided to do something totally different. Right now, Juniper is waging a PR battle over how its QFabric is totally different from TRILL or Cisco’s FabricPath. HP is waging a PR war against Cisco from the standpoint that FabricPath is not standards-based, while HP is content to wait for TRILL to become ratified and rely on their own proprietary IRF solution in the interim. To me, the innovation here isn’t so much centered around what any one particular company is capable of, but rather what their competitors are incapable of doing. FabricPath isn’t a standard. IRF doesn’t scale to the Nth node. QFabric is currently a pipe dream without substance. Every vendor is guilty of attacking their competitors rather than extolling the virtues of their particular solution. If you spend your time telling me what your product does rather than concentrating on what your competitor’s product doesn’t do, you are much more likely to convince me to buy your widget, or so the thinking goes.

Tom’s Take

Creation and innovation take resources. Plain and simple. You’re either going to use brainpower to invent something, money to buy something, or hot air to talk about how much something sucks. I love people who are creative enough to think of things that no one else has thought of before. Late-night television is littered with them. Others aren’t as creative, but have the resources to make those creative people prosper in a great environment. These kinds of innovators are tried and true and have my utmost respect. The last group, the whiners and complainers, not so much. The amount of effort it takes to sell me on the idea that someone else’s hard work isn’t up to snuff could be better directed toward developing new products or ideas. That negativity can be turned into hugely positive things if only we take the time to focus. I make an effort to stop presenters once their message becomes all about how something isn’t great and make them tell me about how great their thing is instead. You quickly see who the true innovators are. Real innovators are proud of their accomplishments and won’t hesitate to talk about them. The whiners and complainers fall apart when they aren’t allowed to use negativity to sell themselves. Think about that the next time you get ready to innovate.