All of this has happened before. All of this will happen again.

The more time I spend listening to storage engineers talk about the pressing issues they face in designing systems in this day and age, the more I’m convinced that we fight the same problems over and over again with different technologies. Whether it be networking, storage, or even wireless, the same architecture problems crop up in new ways and require different engineers to solve them all over again.

Quality is Problem One

A chance conversation with Howard Marks (@DeepStorageNet) at Storage Field Day 4 led me to start thinking about these problems. He was talking during a presentation about the difficulty that storage vendors have faced in implementing quality of service (QoS) in storage arrays. As Howard described some of the issues with isolating neighboring workloads and ensuring they can’t cause performance issues for a specific application, I started thinking about the implementation of low latency queuing (LLQ) for voice in networking.

LLQ was created to solve a specific problem. High-volume, low-bandwidth flows can starve the other queues in a traditional priority queuing system. In much the same way, applications that demand high amounts of input/output operations per second (IOPS) while storing very little data can cause huge headaches for storage admins. Storage has tried to solve this problem with hardware in the past by creating things like write-back caching or even super-fast flash storage caching tiers.
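To make the queuing idea concrete, here is a minimal sketch of an LLQ-style scheduler: a strict-priority queue that is capped (policed) each round so it can't starve best-effort traffic. The class name, the notion of a fixed number of "slots" per round, and the parameter names are all assumptions made for illustration. Real LLQ implementations police by bandwidth and drop excess priority traffic under congestion rather than delaying it.

```python
from collections import deque


class PolicedPriorityScheduler:
    """Toy LLQ-style scheduler (hypothetical, for illustration only).

    Each round has a fixed number of service slots. Latency-sensitive
    items are served first, but only up to `priority_cap` slots while
    best-effort work is waiting, so they cannot starve everything else.
    """

    def __init__(self, slots_per_round, priority_cap):
        self.slots_per_round = slots_per_round
        # The cap can never exceed the total slots in a round.
        self.priority_cap = min(priority_cap, slots_per_round)
        self.priority = deque()
        self.best_effort = deque()

    def enqueue(self, item, latency_sensitive=False):
        queue = self.priority if latency_sensitive else self.best_effort
        queue.append(item)

    def run_round(self):
        served = []
        # 1. Serve the priority queue first, up to its policed cap.
        while self.priority and len(served) < self.priority_cap:
            served.append(self.priority.popleft())
        # 2. Give the remaining slots to best-effort work.
        while self.best_effort and len(served) < self.slots_per_round:
            served.append(self.best_effort.popleft())
        # 3. If slots are still free (no contention), priority may use them.
        while self.priority and len(served) < self.slots_per_round:
            served.append(self.priority.popleft())
        return served


sched = PolicedPriorityScheduler(slots_per_round=4, priority_cap=2)
for i in range(5):
    sched.enqueue(f"voice-{i}", latency_sensitive=True)
    sched.enqueue(f"data-{i}")

print(sched.run_round())  # two voice items, then two data items
```

The same shape applies to a storage array: swap "voice" for a small, IOPS-hungry workload and "data" for its neighbors, and the cap becomes the QoS limit that keeps one tenant from monopolizing the device.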

Make It Go Away

In fact, a lot of the problems in storage mirror those from the networking world many years ago. Performance issues used to have a very simple solution: throw more hardware at the problem until it goes away. In networking, you throw bandwidth at the issue. In storage, more IOPS are the answer. When hardware isn’t pushed to the absolute limit, the answer will always be to keep driving it higher and higher. But what happens when performance can’t fix the problem any more?

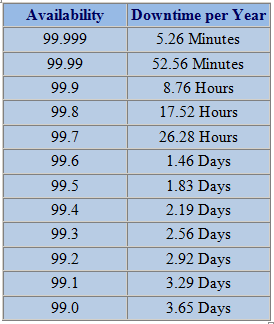

Think of a sports car like the Bugatti Veyron. It is the fastest production car available today, with a top speed well over 250 miles per hour. In fact, Bugatti refuses to talk about the absolute top speed, instead countering “Would you ask how fast a jet can fly?” One of the limiting factors in attaining a true measure of the speed isn’t found in the car’s engine. Instead, the subsystems around the engine begin to fail at such stressful speeds. At 258 miles per hour, the tires on the car will completely disintegrate in 15 minutes. The fuel tank will be emptied in 12 minutes. Bugatti wisely installed a governor on the engine limiting it to a maximum of 253 miles per hour in an attempt to prevent people from pushing the car to its limits. That’s a software control designed to prevent performance issues by creating artificial limits. Sound familiar?

Storage has hit the wall when it comes to performance. PCIe flash storage devices are capable of hundreds of thousands of IOPS. A single PCIe card has the throughput of an entire data center. Hungry applications can quickly saturate the weak link in a system. In days gone by, that was the IOPS capacity of a storage device. Today, it’s the link that connects the flash device to the rest of the system. Not until the advent of PCIe was the flash storage device fast enough to keep pace with workloads starved for performance.
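The weak-link idea can be sketched in a few lines: the throughput a workload actually sees is capped by the slowest stage in the path, and upgrading that stage simply moves the bottleneck somewhere else. The stage names and IOPS figures below are made up for illustration, not measurements of any real hardware.

```python
def find_bottleneck(stages):
    """Return (name, capacity) of the slowest stage in a path.

    `stages` maps a stage name to the IOPS it can sustain. The whole
    path can go no faster than its slowest member.
    """
    name = min(stages, key=stages.get)
    return name, stages[name]


# Hypothetical capacities in IOPS; every number here is an assumption.
path = {
    "flash_device": 500_000,
    "host_interface": 80_000,
    "storage_controller": 200_000,
}

print(find_bottleneck(path))  # the host interface limits the path

# Upgrade the interface and the bottleneck shifts to the next weakest stage.
upgraded = dict(path, host_interface=600_000)
print(find_bottleneck(upgraded))
```

Nothing about the flash device changed between the two calls, which is the point: once the fastest component is no longer the limit, buying more of it does nothing.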

Storage isn’t the only technology with hungry workloads stressing weak connection points. Networking is quickly reaching this point with the shift to cloud computing. Instead of the east-west traffic between data center racks being a point of contention, the connection point between the user and the public cloud will now be stressed to the breaking point. WAN connection speeds have the same issues that flash storage devices do with non-PCIe interfaces. They are quickly saturated by the amount of outbound traffic generated by hungry applications. In this case, those applications are located in some far away data center instead of being next door on a storage array. The net result is the same: resource contention.

Tom’s Take

Performance is king. The faster, stronger, better widgets win in the marketplace. When architecting systems, it is often much easier to specify a bigger, faster device to alleviate performance problems. Sadly, that only works to a point. Eventually, the performance problems will shift to components that can’t be upgraded. IOPS give way to transfer speeds on SATA connectors. Data center traffic will be slowed by WAN uplinks. Even the CPU in a virtual server will eventually become an issue after throwing more RAM at a problem. Rather than just throwing performance at problems until they disappear, we need to take a long, hard look at how we build things and make good decisions up front to prevent problems before they happen.

Like the Veyron engineers, we have to make smart choices about limiting the performance of a piece of hardware to ensure that other bottlenecks do not happen. It’s not fun to explain why a given workload doesn’t get priority treatment. It’s not glamorous to tell someone their archival data doesn’t need to live in a flash storage tier. But it’s the decision that must be made to ensure the system is operating at peak performance.